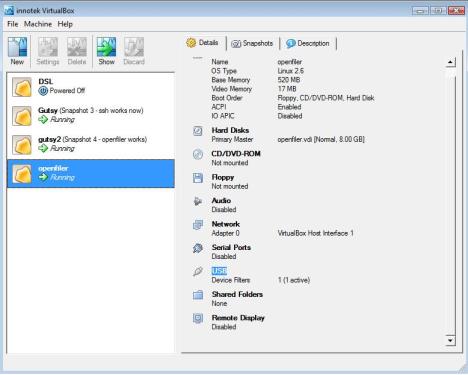

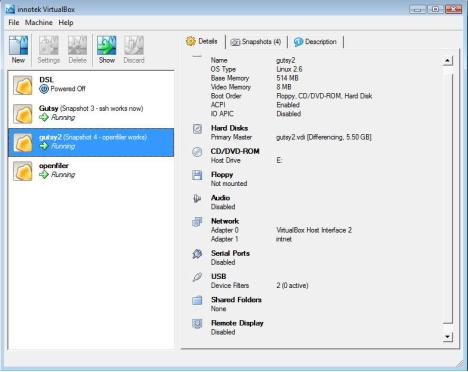

Bird's-eye view of the end configuration...

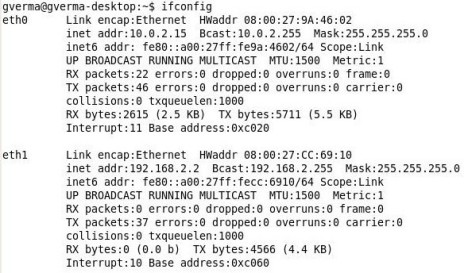

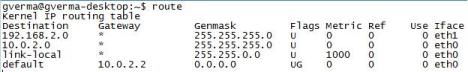

Here is a bird's-eye view of the configuration we will have by the end of this article:


My Grumblings with Openfiler..

Those of you who have started experimenting with Openfiler may already like its features. My biggest concern with Openfiler is that its administrative GUI is buggy and makes a lot of assumptions.

For example, it would not show logical volumes that had been created on an external iSCSI hard disk by another Openfiler virtual machine installation.

Somehow, CLI commands such as pvscan, lvscan and vgscan are able to discover previously created physical volumes, logical volumes and volume groups, but the front-end GUI (http://<openfiler IP>:446) fails to do the same.

Although there is another open source product called FreeNAS, I resisted the temptation to switch loyalties too soon, since no product is free of bugs.

My requirement..

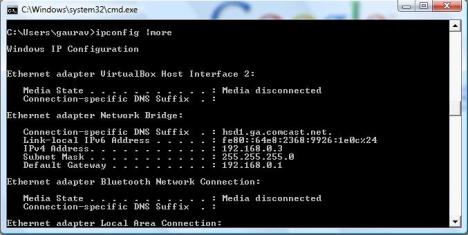

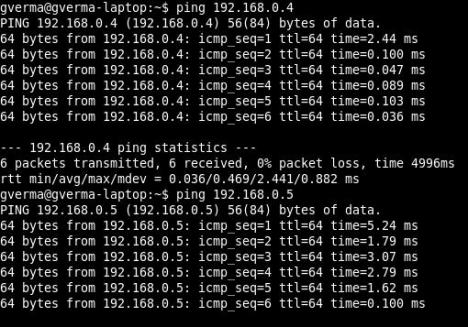

Anyway, my real requirement was to build a home-grown 10g RAC cluster using VirtualBox virtual machines. For better or worse, I had chosen SuSE Linux 9 (SP3) as the base operating system for the 10g RAC installation. One good reason was that many large customers, such as Office Depot, have chosen to implement 10g RAC on SuSE Linux 9.3. As time goes by, I expect SuSE Linux to become a more popular platform, hence my persistence with this distribution.

There are ways to spoof a 10g RAC installation with a single node too, but I wanted to simulate the real thing and be able to run a Train-The-Trainer session for my teammates.

Looking back at my initial struggles...

In hindsight, figuring out how to discover iSCSI targets on Ubuntu was much easier. That experience is documented here: Combining Openfiler and Virtualbox (Ubuntu guest OS on windows host)

My initial attempts were full of anguish, especially once I realized that I could not use the open-iscsi package with SuSE Linux 9 (2.6.5-7.244 kernel) at all. SuSE Linux 10.x seems to support it well. The reason is simply that the open-iscsi package works only with kernels 2.6.14 and above.
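To check which side of the 2.6.14 cut-off a machine falls on, compare its kernel release (as reported by uname -r) against 2.6.14. Here is a minimal POSIX shell sketch. The function names pick_initiator and version_ge are hypothetical helpers and are not part of either package. The sketch assumes the release string starts with a dotted version, optionally followed by a -suffix as in 2.6.5-7.244-default, and it compares only the first three version fields.

```sh
#!/bin/sh
# version_ge A B: succeed when dotted version A >= B, comparing the first
# three numeric fields (missing fields count as 0).
version_ge() {
    awk -v a="$1" -v b="$2" 'BEGIN {
        n = split(a, x, "."); m = split(b, y, ".")
        for (i = 1; i <= 3; i++) {
            xi = (i <= n) ? x[i] + 0 : 0
            yi = (i <= m) ? y[i] + 0 : 0
            if (xi > yi) exit 0
            if (xi < yi) exit 1
        }
        exit 0
    }'
}

# pick_initiator RELEASE: print the initiator package to try for a kernel
# release string such as 2.6.5-7.244-default (hypothetical helper).
# open-iscsi needs 2.6.14 or later; older kernels fall back to linux-iscsi.
pick_initiator() {
    base=${1%%-*}                      # drop the "-7.244-default" suffix
    if version_ge "$base" 2.6.14; then
        echo open-iscsi
    else
        echo linux-iscsi
    fi
}
```

On the RAC node, pick_initiator "$(uname -r)" prints linux-iscsi for the 2.6.5-7.244-default kernel.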

Tough luck there.

So, what is available for SuSE Linux 9 if you want to discover iSCSI target devices?

Well, there are some options. The linux-iscsi package is readily available, and with a little configuration (which is quite simple) it works great. Many people tried to woo me toward other distributions such as Oracle Enterprise Linux 5, which ships with the iscsi-initiator-utils package, but I stood my ground.

Here are some important distinctions between linux-iscsi and open-iscsi:

- The linux-iscsi package (aka iscsi-sfnet) reads /etc/iscsi.conf

- The open-iscsi package reads /etc/iscsid.conf. This package has an additional iscsiadm utility for discovering targets.

As of now, the linux-iscsi and open-iscsi projects have been merged into a single open-iscsi project (according to their announcement).

Now, the difficult part: figuring out the setup..

The most difficult part was finding a setup that worked. Eventually, after umpteen tries, it did work. More than once, I wondered whether it was even worth trying the linux-iscsi initiator package with Openfiler as the iSCSI target, because the iscsi-target drivers seemed more compatible with the open-iscsi initiator package (my Ubuntu experience was dominating my thinking).

However, I now realize that this perception was mistaken. All I really needed was a proper configuration of the linux-iscsi package as the iSCSI initiator.

I will assume that the reader is familiar with the terms iSCSI initiator and iSCSI target.

If not, here is a crash course: iSCSI targets are the LUNs or logical volumes in your NAS device, and the iSCSI initiator is the client machine that wants to use those LUNs or logical volumes. You dig?

With the iscsid process running at debug level 10 (# iscsid -d 10 &), I was getting the following error while discovering targets:

.. >> iscsid[17946]: connecting to 10.143.213.233:446
.. >> iscsid[17946]: connected local port 33785 to 10.143.213.233:446
.. >> iscsid[17946]: discovery session to 10.143.213.233:446 starting iSCSI login on fd 1
.. >> iscsid[17946]: sending login PDU with current stage 1, next stage 3, transit 0x80, isid 0x00023d000001
.. >> iscsid[17946]: > InitiatorName=iqn.1987-05.com.cisco:01.51f06557c68
.. >> iscsid[17946]: > InitiatorAlias=raclinux1
.. >> iscsid[17946]: > SessionType=Discovery
.. >> iscsid[17946]: > HeaderDigest=None
.. >> iscsid[17946]: > DataDigest=None
.. >> iscsid[17946]: > MaxRecvDataSegmentLength=8192
.. >> iscsid[17946]: > X-com.cisco.PingTimeout=5
.. >> iscsid[17946]: > X-com.cisco.sendAsyncText=Yes
.. >> iscsid[17946]: > X-com.cisco.protocol=draft20
.. >> iscsid[17946]: wrote 48 bytes of PDU header
.. >> iscsid[17946]: wrote 248 bytes of PDU data
.. >> iscsid[17946]: socket 1 closed by target
.. >> iscsid[17946]: login I/O error, failed to receive a PDU
.. >> iscsid[17946]: retrying discovery login to 10.143.213.233
.. >> iscsid[17946]: disconnecting session 0x80b4890, fd 1
.. >> iscsid[17946]: discovery session to 10.143.213.233:446 sleeping for 2 seconds before next login attempt

I saw light at the end of the tunnel after trying a simple setup mentioned in http://www-941.ibm.com/collaboration/wiki/display/LinuxP/iSCSI

Let's talk about the experience in more detail now.

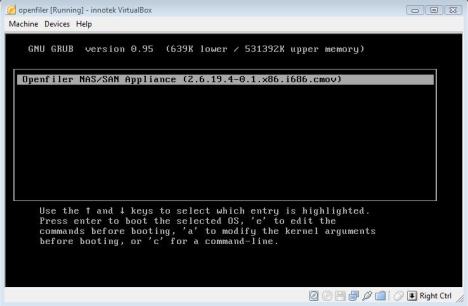

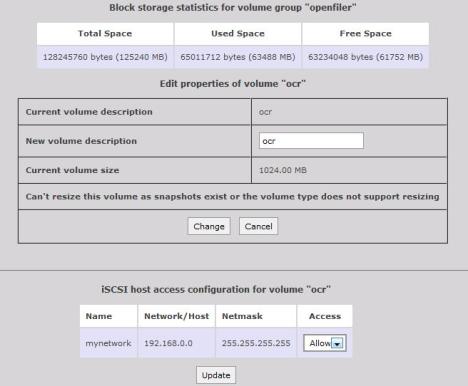

The setup on the iSCSI target (Openfiler) side..

[root@openfiler~]# uname -a
Linux openfiler.usdhcp.example.com 2.6.19.4-0.1.x86.i686.cmov #1 ..

I did not set up a network or subnet of allowed initiators for the LUNs. As you can see here, the /etc/initiators.allow and /etc/initiators.deny files do not exist:

[root@openfiler~]# ls /etc/initiators.allow
ls: /etc/initiators.allow: No such file or directory
[root@openfiler~]# ls /etc/initiators.deny
ls: /etc/initiators.deny: No such file or directory
[root@openfiler~]# more /etc/ietd.conf
Target iqn.2006-01.com.openfiler:openfiler.test
        Lun 0 Path=/dev/openfiler/test,Type=fileio
[root@openfiler~]# service iscsi-target status
ietd (pid 4164) is running...

Checking if the device drivers are loaded:

[root@openfiler~]# lsmod | grep scsi
iscsi_trgt 61788 4
scsi_mod 111756 2 sd_mod,usb_storage

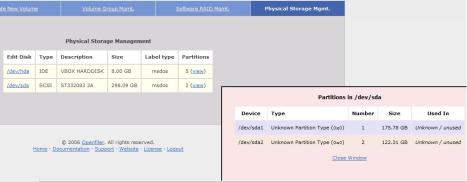

Checking if the NAS device is discovered:

[root@openfiler~]# more /proc/scsi/scsi
Attached devices:
Host: scsi0 Channel: 00 Id: 00 Lun: 00
  Vendor: ST332083 Model: 3A Rev: 3.AA
  Type: Direct-Access ANSI SCSI revision: 02

Checking which targets the iSCSI target daemon is publishing (no initiator has logged in yet):

[root@openfiler~]# cat /proc/net/iet/session
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test

Discover the volume groups, logical volumes and physical volumes:

[root@openfiler~]# vgscan
Reading all physical volumes. This may take a while...
Found volume group "openfiler" using metadata type lvm2
[root@openfiler~]# lvscan
ACTIVE '/dev/openfiler/ocr' [1.00 GB] inherit
ACTIVE '/dev/openfiler/vote' [1.00 GB] inherit
ACTIVE '/dev/openfiler/asm' [60.00 GB] inherit
ACTIVE '/dev/openfiler/test' [32.00 MB] inherit
[root@openfiler~]# pvscan
PV /dev/sda2 VG openfiler lvm2 [122.30 GB / 60.27 GB free]
Total: 1 [122.30 GB] / in use: 1 [122.30 GB] / in no VG: 0 [0 ]

As you can see, three more logical volumes were discovered than we have configured in /etc/ietd.conf. We will deal with this later:

[root@openfiler~]# ls -l /dev/openfiler
total 0
lrwxrwxrwx 1 root root 25 Apr 24 09:58 asm -> /dev/mapper/openfiler-asm
lrwxrwxrwx 1 root root 25 Apr 24 09:58 ocr -> /dev/mapper/openfiler-ocr
lrwxrwxrwx 1 root root 26 Apr 24 12:07 test -> /dev/mapper/openfiler-test
lrwxrwxrwx 1 root root 26 Apr 24 09:59 vote -> /dev/mapper/openfiler-vote
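You can script the search for logical volumes that lvscan reports but /etc/ietd.conf does not export yet. This is a minimal sketch with two assumptions: lvscan prints each volume path in single quotes, as above, and ietd.conf uses Lun lines of the form Lun N Path=...,Type=.... unexported_lvs is a hypothetical helper name.

```sh
#!/bin/sh
# unexported_lvs LVSCAN_OUTPUT IETD_CONF
# Print every LV path in saved lvscan output that no "Lun ... Path=" line
# in the ietd.conf-style file exports yet (hypothetical helper).
unexported_lvs() {
    awk '
        FILENAME == ARGV[1] {                  # first file: ietd.conf
            if (match($0, /Path=[^, \t]+/))
                exported[substr($0, RSTART + 5, RLENGTH - 5)] = 1
            next
        }
        {                                      # second file: lvscan output
            n = split($0, f, "\047")           # split on single quotes
            if (n >= 3 && !(f[2] in exported))
                print f[2]
        }' "$2" "$1"
}
```

For example: lvscan > /tmp/lvscan.txt, then unexported_lvs /tmp/lvscan.txt /etc/ietd.conf. With the files shown above, it lists /dev/openfiler/ocr, /dev/openfiler/vote and /dev/openfiler/asm.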

The real deal: iSCSI initiator setup using the linux-iscsi package on SuSE Linux 9.3

raclinux1:~ # uname -a
Linux raclinux1 2.6.5-7.244-default #1 Mon Dec 12 18:32:25 UTC 2005 i686 i686 i386 GNU/Linux

Make sure the linux-iscsi package is installed:

raclinux1:/etc # rpm -qa | grep linux-iscsi
linux-iscsi-4.0.1-98

Show the iSCSI devices discovered so far:

raclinux1:/etc # iscsi-ls
###############################################################################
iSCSI driver is not loaded
###############################################################################

Since the iSCSI driver is not loaded, load it (it is also known as the iSCSI SFNet driver):

raclinux1:/etc # modprobe iscsi

Verify that the iscsi driver was loaded:

raclinux1:/etc # lsmod | grep scsi
iscsi 182192 0
scsi_mod 112972 5 iscsi,sg,st,sd_mod,sr_mod
raclinux1:/etc #
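Piping lsmod through grep scsi, as above, also matches other modules such as scsi_mod, and iscsi_trgt on the Openfiler side. A stricter check compares the exact module name in lsmod's first column. Here is a POSIX shell sketch; module_loaded is a hypothetical helper.

```sh
#!/bin/sh
# module_loaded NAME: succeed if NAME is the exact module name in the first
# column of lsmod output read from stdin (substring matches do not count).
module_loaded() {
    awk -v m="$1" '$1 == m { found = 1 } END { exit (found ? 0 : 1) }'
}
```

For example, lsmod | module_loaded iscsi || modprobe iscsi loads the driver only when it is missing.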

Check which devices have been configured. Right now, no iSCSI devices have been discovered:

raclinux1:/etc # iscsi-ls
*******************************************************************************
Cisco iSCSI Driver Version ... 4.0.198 ( 21-May-2004 )
*******************************************************************************
raclinux1:/etc #

Configure the /etc/iscsi.conf file for linux-iscsi, using the simplest possible case. This is SO the key.

Trivia

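Initially, I had also given port 446 (the Openfiler web GUI port) in the DiscoveryAddress, and that was causing the very cryptic 'login I/O error, failed to receive a PDU' error. With the port left out, iscsid connects to the standard iSCSI port 3260, as the debug log further down shows.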

I had searched all over the internet to resolve this error, including the Openfiler forums, only to find that a few people had resolved it with a firmware upgrade! Unfortunately, there is very little literature on this error on the internet, which is why I hope this article helps someone out there facing the same situation.

raclinux1:~ # more /etc/iscsi.conf
# this is the IP of the openfiler iscsi target machine
DiscoveryAddress=10.143.213.233
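Because an explicit port caused the trouble here, a quick sanity check of /etc/iscsi.conf can flag any DiscoveryAddress whose port is not 3260. This is a minimal sketch. It assumes entries of the form DiscoveryAddress=HOST or DiscoveryAddress=HOST:PORT, one per line. It also assumes that an address without a port means 3260, which matches the debug log in this article. check_discovery_addresses is a hypothetical name.

```sh
#!/bin/sh
# check_discovery_addresses FILE: warn about DiscoveryAddress entries whose
# port is not 3260; exit non-zero if any were found (hypothetical helper).
check_discovery_addresses() {
    awk -F= '
        /^[ \t]*#/ { next }                       # skip comment lines
        $1 ~ /^[ \t]*DiscoveryAddress[ \t]*$/ {
            addr = $2; gsub(/[ \t]/, "", addr)
            port = 3260                           # assumed default, no :PORT
            if (split(addr, p, ":") == 2) port = p[2]
            if (port != 3260) {
                printf "WARNING: %s uses port %s, not 3260\n", addr, port
                bad = 1
            }
        }
        END { exit (bad ? 1 : 0) }' "$1"
}
```

For example, check_discovery_addresses /etc/iscsi.conf prints nothing and succeeds for the configuration shown above. For DiscoveryAddress=10.143.213.233:446 it prints a warning and fails.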

Verify that we have a unique IQN name for the initiator node (SuSE Linux 9.3):

raclinux1:~ # more /etc/initiatorname.iscsi
## DO NOT EDIT OR REMOVE THIS FILE!
## If you remove this file, the iSCSI daemon will not start.
## If you change the InitiatorName, existing access control lists
## may reject this initiator. The InitiatorName must be unique
## for each iSCSI initiator. Do NOT duplicate iSCSI InitiatorNames.
InitiatorName=iqn.1987-05.com.cisco:01.51f06557c68
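The file insists that every initiator has its own name, so it is worth checking the InitiatorName on each RAC node. The sketch below extracts the name and checks it against the iqn. naming format from RFC 3720: iqn.yyyy-mm.reversed-domain, optionally followed by :unique-string. The regular expression is a simplified assumption that accepts only lowercase letters, digits, dots and hyphens in the domain part. initiator_name and is_iqn are hypothetical helpers.

```sh
#!/bin/sh
# initiator_name FILE: print the InitiatorName= value from an
# /etc/initiatorname.iscsi-style file; "##" comment lines never match.
initiator_name() {
    sed -n 's/^InitiatorName=//p' "$1"
}

# is_iqn NAME: succeed if NAME looks like a (simplified) RFC 3720 iqn name:
# iqn.<yyyy-mm>.<reversed domain>[:<unique string>]
is_iqn() {
    printf '%s\n' "$1" |
        grep -Eq '^iqn\.[0-9]{4}-[0-9]{2}\.[a-z0-9]([a-z0-9.-]*[a-z0-9])?(:.+)?$'
}
```

Run initiator_name /etc/initiatorname.iscsi on every node and compare the results. This is a cheap way to catch duplicate names, for example on VirtualBox machines cloned from the same image.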

Now, start the iscsid process with a high debug level to see what goes on behind the scenes. I chose debug level 10 for no particular reason:

raclinux1:/etc # iscsid -d 10 &
[1] 30332
raclinux1:/etc # 1209056895.780916 >> iscsid[30332]: iSCSI debug level 10
1209056895.781428 >> iscsid[30332]: InitiatorName=iqn.1987-05.com.cisco:01.51f06557c68
1209056895.781790 >> iscsid[30332]: InitiatorAlias=raclinux1
1209056895.782101 >> iscsid[30332]: version 4.0.198 ( 21-May-2004)
1209056895.785327 >> iscsid[30333]: pid file fd 0
1209056895.785694 >> iscsid[30333]: locked pid file /var/run/iscsid.pid
1209056895.795251 >> iscsid[30333]: updating config 0xbfffeb10 from /etc/iscsi.conf
... ....
1209056895.799724 >> iscsid[30334]: sendtargets discovery process 0x80a80c0 starting, address 10.143.213.233:3260, continuous 1
1209056895.800315 >> iscsid[30334]: sendtargets discovery process 0x80a80c0 to 10.143.213.233:3260 using isid 0x00023d000001
1209056895.802181 >> iscsid[30334]: connecting to 10.143.213.233:3260
1209056895.803657 >> iscsid[30334]: connected local port 34261 to 10.143.213.233:3260
1209056895.804189 >> iscsid[30334]: discovery session to 10.143.213.233:3260 starting iSCSI login on fd 1
1209056895.805081 >> iscsid[30334]: sending login PDU with current stage 1, next stage 3, transit 0x80, isid 0x00023d000001
1209056895.805415 >> iscsid[30334]: > InitiatorName=iqn.1987-05.com.cisco:01.51f06557c68
1209056895.805807 >> iscsid[30334]: > InitiatorAlias=raclinux1
1209056895.806120 >> iscsid[30334]: > SessionType=Discovery
1209056895.806535 >> iscsid[30334]: > HeaderDigest=None
1209056895.806918 >> iscsid[30334]: > DataDigest=None
1209056895.807213 >> iscsid[30334]: > MaxRecvDataSegmentLength=8192
1209056895.807515 >> iscsid[30334]: > X-com.cisco.PingTimeout=5
1209056895.807910 >> iscsid[30334]: > X-com.cisco.sendAsyncText=Yes
1209056895.808217 >> iscsid[30334]: > X-com.cisco.protocol=draft20
1209056895.808555 >> iscsid[30334]: wrote 48 bytes of PDU header
1209056895.809044 >> iscsid[30334]: wrote 248 bytes of PDU data
1209056895.810896 >> iscsid[30333]: done starting discovery processes
... ...
1209056895.825881 >> iscsid[30334]: discovery login success to 10.143.213.233
1209056895.800928 >> iscsid[30334]: resolved 10.143.213.233 to 10.4294967183.4294967253.4294967273
... ... TargetName=iqn.2006-01.com.openfiler:openfiler.test
1209056895.831110 >> iscsid[30334]: > TargetAddress=10.143.213.233:3260,1
1209056895.831416 >> iscsid[30334]: discovery session to 10.143.213.233:3260 received text response, 88 data bytes, ttt 0xffffffff, final 0x80
... ...
1209056895.849821 >> iscsid[30333]: mkdir /var/lib
1209056895.850134 >> iscsid[30333]: mkdir /var/lib/iscsi
1209056895.850439 >> iscsid[30333]: opened bindings file /var/lib/iscsi/bindings
1209056895.850769 >> iscsid[30333]: locked bindings file /var/lib/iscsi/bindings
1209056895.851143 >> iscsid[30333]: scanning bindings file for 1 unbound sessions
1209056895.851580 >> iscsid[30333]: iSCSI bus 0 target 0 bound to session #1 to iqn.2006-01.com.openfiler:openfiler.test
1209056895.851906 >> iscsid[30333]: done scanning bindings file at line 11
1209056895.852320 >> iscsid[30333]: unlocked bindings file /var/lib/iscsi/bindings
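The debug output scrolls by fast, so it helps to pull out just the targets returned by SendTargets discovery. This minimal sketch assumes the log has been saved to a file. It also assumes each target appears as a TargetName= token followed by a TargetAddress= token, as in the log above. discovered_targets is a hypothetical helper.

```sh
#!/bin/sh
# discovered_targets: read iscsid debug output on stdin and print one
# "TargetName TargetAddress" pair per discovered target (hypothetical helper).
discovered_targets() {
    awk '
        {
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^TargetName=/)
                    name = substr($i, 12)           # text after "TargetName="
                if ($i ~ /^TargetAddress=/ && name != "") {
                    print name, substr($i, 15)      # text after "TargetAddress="
                    name = ""
                }
            }
        }'
}
```

For instance, start the daemon with iscsid -d 10 > /tmp/iscsid.log 2>&1 & and later run discovered_targets < /tmp/iscsid.log. It is not shown here whether iscsid writes its debug messages to stdout or stderr, so both are redirected. For the log above, the expected line is iqn.2006-01.com.openfiler:openfiler.test 10.143.213.233:3260,1.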

Voila! A new virtual disk is discovered!

Paydirt! The iSCSI target is detected, as these messages in /var/log/messages show:

iSCSI: 4.0.188.26 ( 21-May-2004) built for Linux 2.6.5-7.244-default
iSCSI: will translate deferred sense to current sense on disk command responses
iSCSI: control device major number 254
scsi15 : SFNet iSCSI driver
iSCSI:detected HBA host #15
iSCSI: bus 0 target 0 = iqn.2006-01.com.openfiler:openfiler.test
iSCSI: bus 0 target 0 portal 0 = address 10.143.213.233 port 3260 group 1
iSCSI: bus 0 target 0 established session #1, portal 0, address 10.143.213.233 port 3260 group 1
  Vendor: Openfile Model: Virtual disk Rev: 0
  Type: Direct-Access ANSI SCSI revision: 04
SCSI device sda: 65536 512-byte hdwr sectors (34 MB)
iSCSI: starting timer thread at 11948918
iSCSI: bus 0 target 0 trying to establish session to portal 0, address 10.143.213.233 port 3260 group 1
SCSI device sda: drive cache: write through
 sda: unknown partition table
Attached scsi disk sda at scsi15, channel 0, id 0, lun 0
Attached scsi generic sg0 at scsi15, channel 0, id 0, lun 0, type 0
md: Autodetecting RAID arrays.
md: autorun ...
md: ... autorun DONE.

Verifying that the target LUNs were indeed discovered:

raclinux1:~ # more /var/lib/iscsi/bindings
# iSCSI bindings, file format version 1.0.
# NOTE: this file is automatically maintained by the iSCSI daemon.
# You should not need to edit this file under most circumstances.
# If iSCSI targets in this file have been permanently deleted, you
# may wish to delete the bindings for the deleted targets.
#
# Format:
# bus target iSCSI
# id id TargetName
#
0 0 iqn.2006-01.com.openfiler:openfiler.test
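The bindings file maps each bus/target pair to a target name, which makes it handy for scripts. This is a minimal sketch assuming the three-column, #-commented layout shown above. bindings and binding_name are hypothetical helpers.

```sh
#!/bin/sh
# bindings FILE: print "bus target TargetName" for every non-comment line
# of a linux-iscsi bindings file (hypothetical helper).
bindings() {
    awk '!/^[ \t]*#/ && NF == 3 { print $1, $2, $3 }' "$1"
}

# binding_name FILE BUS TARGET: print the target name bound to BUS/TARGET.
binding_name() {
    awk -v b="$2" -v t="$3" \
        '!/^[ \t]*#/ && NF == 3 && $1 == b && $2 == t { print $3 }' "$1"
}
```

For the file above, binding_name /var/lib/iscsi/bindings 0 0 prints iqn.2006-01.com.openfiler:openfiler.test.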

Let's restart the linux-iscsi service (without debugging this time):

raclinux1:/etc # rciscsi stop
Stopping iSCSI: sync umount sync iscsid
raclinux1:/etc # rciscsi start
Starting iSCSI: iscsi iscsid fsck/mount done
raclinux1:/etc # rciscsi status
Checking for service iSCSI iSCSI driver is loaded running

Check what devices were discovered:

raclinux1:/etc # iscsi-ls
*******************************************************************************
Cisco iSCSI Driver Version ... 4.0.198 ( 21-May-2004 )
*******************************************************************************
TARGET NAME : iqn.2006-01.com.openfiler:openfiler.test
TARGET ALIAS :
HOST NO : 18
BUS NO : 0
TARGET ID : 0
TARGET ADDRESS : 1.1.3923087114.0:0
SESSION STATUS : DROPPED AT Thu Apr 24 10:19:16 2008
NO. OF PORTALS : 1
Segmentation fault
raclinux1:/etc # fdisk -l /dev/sda
Disk /dev/sda: 33 MB, 33554432 bytes
2 heads, 32 sectors/track, 1024 cylinders
Units = cylinders of 64 * 512 = 32768 bytes
Disk /dev/sda doesn't contain a valid partition table

You can now partition the iscsi device using fdisk:

raclinux1:/etc # fdisk /dev/sda
Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel
Building a new DOS disklabel. Changes will remain in memory only,
until you decide to write them. After that, of course, the previous
content won't be recoverable.
Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)
Command (m for help): n
Command action
   e   extended
   p   primary partition (1-4)
p
Partition number (1-4): 1
First cylinder (1-1024, default 1):
Using default value 1
Last cylinder or +size or +sizeM or +sizeK (1-1024, default 1024):
Using default value 1024
Command (m for help): w
The partition table has been altered!
Calling ioctl() to re-read partition table.
Syncing disks.
raclinux1:/etc #
raclinux1:/etc # fdisk -l /dev/sda
Disk /dev/sda: 33 MB, 33554432 bytes
2 heads, 32 sectors/track, 1024 cylinders
Units = cylinders of 64 * 512 = 32768 bytes
Device Boot Start End Blocks Id System
/dev/sda1 1 1024 32752 83 Linux
raclinux1:/etc # ls -l /dev/disk
total 132
drwxr-xr-x 4 root root 4096 Apr 10 08:44 .
drwxr-xr-x 33 root root 118784 Apr 24 10:19 ..
drwxr-xr-x 2 root root 4096 Apr 24 10:21 by-id
drwxr-xr-x 2 root root 4096 Apr 24 10:21 by-path
raclinux1:/etc # ls -l /dev/disk/by-id
total 8
...
.. iscsi-iqn.2006-01.com.openfiler:openfiler.test-0 -> ../../sda
.. iscsi-iqn.2006-01.com.openfiler:openfiler.test-0-generic -> ../../sg0
.. iscsi-iqn.2006-01.com.openfiler:openfiler.test-0p1 -> ../../sda1
raclinux1:/etc # ls -l /dev/disk/by-path
total 8
.. ip-10.143.213.233-iscsi-iqn.2006-01.com.openfiler:openfiler.test-0 -> ../../sda
.. ip-10.143.213.233-iscsi-iqn.2006-01.com.openfiler:openfiler.test-0-generic -> ../../sg0
.. ip-10.143.213.233-iscsi-iqn.2006-01.com.openfiler:openfiler.test-0p1 -> ../../sda1
...
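If you have several LUNs to partition the same way, you can replay the keystrokes from the session above. This sketch only prints them: n, p, 1, two empty lines to accept the default cylinders, then w. Piping them into fdisk assumes your fdisk version asks the same questions in the same order, so try it interactively first. single_partition_keys is a hypothetical helper.

```sh
#!/bin/sh
# single_partition_keys: print the answers typed in the fdisk session above:
# new partition (n), primary (p), number 1, default first cylinder (empty),
# default last cylinder (empty), then write the table (w).
single_partition_keys() {
    printf 'n\np\n1\n\n\nw\n'
}
```

For example, single_partition_keys | fdisk /dev/sdb. This writes a new partition table to whatever device you name, so double-check the device (for instance with iscsi-device) before running it.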

Meanwhile, let's look at the sessions on the Openfiler server:

[root@openfiler~]# cat /proc/net/iet/session
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test
  sid:564049469047296 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
[root@openfiler~]# more /proc/net/iet/*
::::::::::::::
/proc/net/iet/session
::::::::::::::
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test
  sid:564049469047296 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
::::::::::::::
/proc/net/iet/session.xml
::::::::::::::
<?xml version="1.0" ?>
<info>
  <target id="1" name="iqn.2006-01.com.openfiler:openfiler.test">
    <session id="564049469047296" initiator="iqn.1987-05.com.cisco:01.51f06557c68">
      <connection id="0" ip="10.143.213.238" state="active" hd="none" dd="none" />
    </session>
  </target>
</info>
::::::::::::::
/proc/net/iet/volume
::::::::::::::
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test
  lun:0 state:0 iotype:fileio iomode:wt path:/dev/openfiler/test
::::::::::::::
/proc/net/iet/volume.xml
::::::::::::::
<?xml version="1.0" ?>
<info>
  <target id="1" name="iqn.2006-01.com.openfiler:openfiler.test">
    <lun number="0" state="0" iotype="fileio" iomode="wt" path="/dev/openfiler/test" />
  </target>
</info>
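To see at a glance which initiators are logged in to which targets, you can flatten the key:value fields of /proc/net/iet/session into one line per connection. This is a minimal sketch assuming the tid/name, sid/initiator and cid/ip/state fields appear in that order, as above. iet_sessions is a hypothetical helper.

```sh
#!/bin/sh
# iet_sessions: read /proc/net/iet/session on stdin and print one
# "target initiator ip state" line per connection (hypothetical helper).
iet_sessions() {
    awk '
        {
            for (i = 1; i <= NF; i++) {
                k = $i; sub(/:.*/, "", k)          # key before the first ":"
                v = $i; sub(/^[^:]*:/, "", v)      # value after the first ":"
                if (k == "name")           target = v
                else if (k == "initiator") initiator = v
                else if (k == "ip")        ip = v
                else if (k == "state")     print target, initiator, ip, v
            }
        }'
}
```

On the Openfiler box, iet_sessions < /proc/net/iet/session prints iqn.2006-01.com.openfiler:openfiler.test iqn.1987-05.com.cisco:01.51f06557c68 10.143.213.238 active for the session above. Once a second RAC node logs in, each target should show one line per node.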

Adding all the discovered logical volumes to Openfiler's published iSCSI targets:

[root@openfiler~]# more /etc/ietd.conf
Target iqn.2006-01.com.openfiler:openfiler.test
        Lun 0 Path=/dev/openfiler/test,Type=fileio
Target iqn.2006-01.com.openfiler:openfiler.asm
        Lun 1 Path=/dev/openfiler/asm,Type=fileio
Target iqn.2006-01.com.openfiler:openfiler.ocr
        Lun 2 Path=/dev/openfiler/ocr,Type=fileio
Target iqn.2006-01.com.openfiler:openfiler.vote
        Lun 3 Path=/dev/openfiler/vote,Type=fileio
[root@openfiler~]# service iscsi-target restart
Stopping iSCSI target service: [ OK ]
Starting iSCSI target service: [ OK ]
[root@openfiler~]# more /proc/net/iet/*
::::::::::::::
/proc/net/iet/session
::::::::::::::
tid:4 name:iqn.2006-01.com.openfiler:openfiler.vote
  sid:282574492336640 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
tid:3 name:iqn.2006-01.com.openfiler:openfiler.ocr
  sid:564049469047296 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
tid:2 name:iqn.2006-01.com.openfiler:openfiler.asm
  sid:845524445757952 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test
  sid:1126999422468608 initiator:iqn.1987-05.com.cisco:01.51f06557c68
    cid:0 ip:10.143.213.238 state:active hd:none dd:none
::::::::::::::
/proc/net/iet/session.xml
::::::::::::::
<?xml version="1.0" ?>
<info>
  <target id="4" name="iqn.2006-01.com.openfiler:openfiler.vote">
    <session id="282574492336640" initiator="iqn.1987-05.com.cisco:01.51f06557c68">
      <connection id="0" ip="10.143.213.238" state="active" hd="none" dd="none" />
    </session>
  </target>
  <target id="3" name="iqn.2006-01.com.openfiler:openfiler.ocr">
    <session id="564049469047296" initiator="iqn.1987-05.com.cisco:01.51f06557c68">
      <connection id="0" ip="10.143.213.238" state="active" hd="none" dd="none" />
    </session>
  </target>
  <target id="2" name="iqn.2006-01.com.openfiler:openfiler.asm">
    <session id="845524445757952" initiator="iqn.1987-05.com.cisco:01.51f06557c68">
      <connection id="0" ip="10.143.213.238" state="active" hd="none" dd="none" />
    </session>
  </target>
  <target id="1" name="iqn.2006-01.com.openfiler:openfiler.test">
    <session id="1126999422468608" initiator="iqn.1987-05.com.cisco:01.51f06557c68">
      <connection id="0" ip="10.143.213.238" state="active" hd="none" dd="none" />
    </session>
  </target>
</info>
::::::::::::::
/proc/net/iet/volume
::::::::::::::
tid:4 name:iqn.2006-01.com.openfiler:openfiler.vote
  lun:0 state:0 iotype:fileio iomode:wt path:/dev/openfiler/asm
tid:3 name:iqn.2006-01.com.openfiler:openfiler.ocr
  lun:0 state:0 iotype:fileio iomode:wt path:/dev/openfiler/asm
tid:2 name:iqn.2006-01.com.openfiler:openfiler.asm
  lun:0 state:0 iotype:fileio iomode:wt path:/dev/openfiler/asm
tid:1 name:iqn.2006-01.com.openfiler:openfiler.test
  lun:0 state:0 iotype:fileio iomode:wt path:/dev/openfiler/test
::::::::::::::
/proc/net/iet/volume.xml
::::::::::::::
<?xml version="1.0" ?>
<info>
  <target id="4" name="iqn.2006-01.com.openfiler:openfiler.vote">
    <lun number="0" state="0" iotype="fileio" iomode="wt" path="/dev/openfiler/vote" />
  </target>
  <target id="3" name="iqn.2006-01.com.openfiler:openfiler.ocr">
    <lun number="0" state="0" iotype="fileio" iomode="wt" path="/dev/openfiler/ocr" />
  </target>
  <target id="2" name="iqn.2006-01.com.openfiler:openfiler.asm">
    <lun number="0" state="0" iotype="fileio" iomode="wt" path="/dev/openfiler/asm" />
  </target>
  <target id="1" name="iqn.2006-01.com.openfiler:openfiler.test">
    <lun number="0" state="0" iotype="fileio" iomode="wt" path="/dev/openfiler/test" />
  </target>
</info>
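Writing the extra Target blocks by hand is error-prone, so here is a small generator. It is a sketch built on three assumptions. First, target names follow the prefix.lv-name pattern used above. Second, every LV is exported with Type=fileio, as in the original file. Third, each target gets its own LUN 0, because IET numbers LUNs within each target (the /proc/net/iet/volume output above also reports lun:0 for every target). ietd_stanzas is a hypothetical helper.

```sh
#!/bin/sh
# ietd_stanzas PREFIX: for each LV path read on stdin, print an ietd.conf
# Target block named PREFIX.<lv name> that exports the LV as LUN 0.
ietd_stanzas() {
    while IFS= read -r path || [ -n "$path" ]; do
        [ -n "$path" ] || continue             # skip blank lines
        printf 'Target %s.%s\n' "$1" "${path##*/}"
        printf '        Lun 0 Path=%s,Type=fileio\n' "$path"
    done
}
```

For example, printf '%s\n' /dev/openfiler/asm /dev/openfiler/ocr /dev/openfiler/vote | ietd_stanzas iqn.2006-01.com.openfiler:openfiler >> /etc/ietd.conf, followed by service iscsi-target restart. Keep a backup of /etc/ietd.conf before appending to it.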

Meanwhile, on the initiator:

Now, let us check which devices were detected (the iscsi-device command works more reliably than iscsi-ls):

raclinux1:/etc # iscsi-device /dev/sda
/dev/sda: 0 0 0 10.143.213.233 3260 iqn.2006-01.com.openfiler:openfiler.test
raclinux1:/etc # iscsi-device /dev/sdb
/dev/sdb: 0 1 0 10.143.213.233 3260 iqn.2006-01.com.openfiler:openfiler.asm
raclinux1:/etc # iscsi-device /dev/sdc
/dev/sdc: 0 2 0 10.143.213.233 3260 iqn.2006-01.com.openfiler:openfiler.vote
raclinux1:/etc # iscsi-device /dev/sdd
/dev/sdd: 0 3 0 10.143.213.233 3260 iqn.2006-01.com.openfiler:openfiler.ocr
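The sdX letters do not follow the order of /etc/ietd.conf (here sdc is vote and sdd is ocr), so it is safer to look devices up by target name. This minimal sketch parses iscsi-device output and assumes the last field on each line is the target name. The helper names device_map and device_for are hypothetical. The /dev/disk/by-path links shown earlier are another stable way to refer to these disks.

```sh
#!/bin/sh
# device_map: read iscsi-device lines on stdin, e.g.
#   /dev/sdb: 0 1 0 10.143.213.233 3260 iqn.2006-01.com.openfiler:openfiler.asm
# and print "device target-name" pairs (hypothetical helper).
device_map() {
    awk 'NF >= 7 { dev = $1; sub(/:$/, "", dev); print dev, $NF }'
}

# device_for SUFFIX: print the device whose target name ends in ".SUFFIX".
device_for() {
    awk -v s="$1" 'NF >= 7 {
        dev = $1; sub(/:$/, "", dev)
        n = length($NF) - length(s)
        if (n > 0 && substr($NF, n) == "." s) print dev
    }'
}
```

For example, for d in /dev/sdb /dev/sdc /dev/sdd; do iscsi-device "$d"; done | device_for ocr prints /dev/sdd on this node.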

raclinux1:/etc # fdisk -l /dev/sd*
Disk /dev/sda: 33 MB, 33554432 bytes
2 heads, 32 sectors/track, 1024 cylinders
Units = cylinders of 64 * 512 = 32768 bytes
Device Boot Start End Blocks Id System
/dev/sda1 1 1024 32752 83 Linux
Disk /dev/sda1: 33 MB, 33538048 bytes
2 heads, 32 sectors/track, 1023 cylinders
Units = cylinders of 64 * 512 = 32768 bytes
Disk /dev/sdb: 64.4 GB, 64424509440 bytes
64 heads, 32 sectors/track, 61440 cylinders
Units = cylinders of 2048 * 512 = 1048576 bytes
Disk /dev/sdb doesn't contain a valid partition table
Disk /dev/sdc: 64.4 GB, 64424509440 bytes
64 heads, 32 sectors/track, 61440 cylinders
Units = cylinders of 2048 * 512 = 1048576 bytes
Disk /dev/sdc doesn't contain a valid partition table
Disk /dev/sdd: 64.4 GB, 64424509440 bytes
64 heads, 32 sectors/track, 61440 cylinders
Units = cylinders of 2048 * 512 = 1048576 bytes
Disk /dev/sdd doesn't contain a valid partition table
raclinux1:/etc # more /var/lib/iscsi/bindings
# iSCSI bindings, file format version 1.0.
# NOTE: this file is automatically maintained by the iSCSI daemon.
# You should not need to edit this file under most circumstances.
# If iSCSI targets in this file have been permanently deleted, you
# may wish to delete the bindings for the deleted targets.
#
# Format:
# bus target iSCSI
# id id TargetName
#
0 0 iqn.2006-01.com.openfiler:openfiler.test
0 1 iqn.2006-01.com.openfiler:openfiler.asm
0 2 iqn.2006-01.com.openfiler:openfiler.vote
0 3 iqn.2006-01.com.openfiler:openfiler.ocr

************************************************************************************************

Caveat:

For some reason, the iscsi-ls utility was not working (it crashed with a segmentation fault), although the devices were accessible.

The iscsi-device command, however, works beautifully.

************************************************************************************************

raclinux1:/etc # iscsi-ls
*******************************************************************************
Cisco iSCSI Driver Version ... 4.0.198 ( 21-May-2004 )
*******************************************************************************
TARGET NAME : iqn.2006-01.com.openfiler:openfiler.test
TARGET ALIAS :
HOST NO : 20
BUS NO : 0
TARGET ID : 0
TARGET ADDRESS : 1.1.3923087114.0:0
SESSION STATUS : DROPPED AT Thu Apr 24 10:43:41 2008
NO. OF PORTALS : 1
Segmentation fault
raclinux1:/etc # echo $?
139
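An exit status of 139 is the shell's way of reporting that the process died from a signal. Statuses above 128 mean signal number (status - 128), so 139 is signal 11 (SIGSEGV), which matches the Segmentation fault message. A tiny helper makes this explicit; describe_status is a hypothetical name.

```sh
#!/bin/sh
# describe_status CODE: explain a shell exit status. Statuses above 128 mean
# the process was killed by signal (CODE - 128); kill -l names the signal.
describe_status() {
    if [ "$1" -gt 128 ]; then
        sig=$(($1 - 128))
        printf 'killed by signal %s (%s)\n' "$sig" "$(kill -l "$sig")"
    else
        printf 'exited with status %s\n' "$1"
    fi
}
```

For example, running iscsi-ls; describe_status $? on this node reports killed by signal 11 (SEGV).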

Conclusion..

This proves that the linux-iscsi package can be made to work on 2.6.5-7.x kernels, and it should work on other Linux distributions with kernels older than 2.6.14 as well. So if open-iscsi will not compile on your distribution, do not despair: there are other avenues. This article also demonstrates how to make better use of Openfiler's command-line interface, as compared to its GUI console.

It also shows that Openfiler has some caveats, but if you know your way around them, life is good.